What Is Entropy in Machine Learning?

Discover the essence of entropy in machine learning in just four steps, unlocking its power in data science.

In machine learning, entropy informs many decisions about data and model performance. It is a concept borrowed from information theory that measures the uncertainty, or disorder, in a dataset.

In this blog post, we'll break down entropy in machine learning into four simple steps, allowing you to grasp its significance and how it's applied in various aspects of the field.

Understanding Entropy in Machine Learning in 4 Steps:

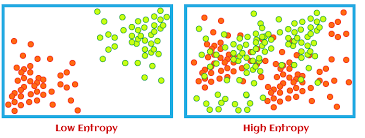

Step 1: What is Entropy? Entropy is a measure of uncertainty or disorder in a dataset. In machine learning, it quantifies how mixed the class labels in a dataset are. Entropy is zero when every example carries the same label and reaches its maximum when the classes are evenly mixed, so high entropy indicates an unpredictable dataset and low entropy a more organized, predictable one.

credit: freepik

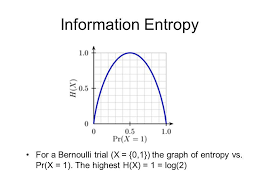

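Step 2: Calculating Entropy: To calculate entropy, you need the distribution of class labels in your dataset. For a binary classification problem, the formula is H = -p log2(p) - (1-p) log2(1-p), where 'p' is the proportion of one class in the dataset. The result ranges from 0 (all examples in one class) to 1 bit (an even 50/50 split). For multi-class problems, the formula generalizes to the sum of -p_i * log2(p_i) over every class 'i', where p_i is the proportion of class 'i'; the binary formula is the two-class case of this sum. By convention, a class with p_i = 0 contributes nothing to the sum.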
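To make the formula concrete, here is a minimal Python sketch of the multi-class entropy calculation. The function name `entropy` and the toy label lists are illustrative assumptions, not part of any particular library.

```python
import math
from collections import Counter


def entropy(labels):
    """Shannon entropy, in bits, of a sequence of class labels."""
    total = len(labels)
    counts = Counter(labels)
    # Sum -p_i * log2(p_i) over the classes that actually occur,
    # so the undefined 0 * log2(0) case never arises.
    return sum(-(c / total) * math.log2(c / total) for c in counts.values())


# Hypothetical toy datasets, chosen only for illustration.
balanced = entropy(["yes", "no", "yes", "no"])  # evenly mixed binary labels
pure = entropy(["yes", "yes", "yes", "yes"])    # a single class
three_way = entropy(["a", "b", "c"])            # three equally likely classes
```

The evenly mixed binary list gives the maximum of 1 bit, the single-class list gives 0, and three equally likely classes give log2(3), which shows that the multi-class maximum grows with the number of classes.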

credit: javaTpoint

Step 3: Information Gain: Entropy plays a critical role in decision tree algorithms. Information gain, a metric used in decision trees, measures the reduction in entropy achieved by splitting a dataset on a particular feature: the entropy of the parent node minus the size-weighted average entropy of the child nodes. At each node, the tree chooses the split with the highest information gain, because that split produces the purest, most predictable subsets.
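As a rough illustration of information gain, the sketch below computes the entropy of the labels before a split and subtracts the entropy of each subset after the split, weighted by subset size. The helper names and the tiny weather-style dataset are hypothetical and exist only for this example.

```python
import math
from collections import Counter


def entropy(labels):
    """Shannon entropy, in bits, of a sequence of class labels."""
    total = len(labels)
    counts = Counter(labels)
    return sum(-(c / total) * math.log2(c / total) for c in counts.values())


def information_gain(feature_values, labels):
    """Parent entropy minus the size-weighted entropy of the subsets
    produced by grouping the labels on each distinct feature value."""
    total = len(labels)
    groups = {}
    for value, label in zip(feature_values, labels):
        groups.setdefault(value, []).append(label)
    weighted = sum(len(g) / total * entropy(g) for g in groups.values())
    return entropy(labels) - weighted


# Hypothetical toy data: does "outlook" or "windy" better predict "play"?
outlook = ["sunny", "sunny", "rainy", "rainy"]
windy = [True, False, True, False]
play = ["no", "no", "yes", "yes"]

gain_outlook = information_gain(outlook, play)  # split separates the classes
gain_windy = information_gain(windy, play)      # split leaves both halves mixed
```

In this toy data, splitting on `outlook` removes all uncertainty (a gain of 1 bit), while splitting on `windy` removes none (a gain of 0), so a decision tree using this criterion would split on `outlook` first.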

credit: Analytics Vidhya

Step 4: Entropy in Feature Selection and Model Evaluation: Entropy is also used in feature selection and model evaluation. Features with high information gain are preferred for splitting datasets, as they contribute more to reducing uncertainty. In model evaluation, entropy-based metrics like cross-entropy (also called log loss) compare a classification model's predicted class probabilities with the true labels; the lower the cross-entropy, the more probability the model assigns to the correct classes.
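For the model evaluation side, the following sketch computes an average cross-entropy over a handful of predictions. It assumes integer class labels and one list of predicted probabilities per example, and it uses the natural logarithm, a common choice that reports the result in nats rather than bits. The function name, the `eps` clipping value, and the sample predictions are all illustrative assumptions.

```python
import math


def cross_entropy(true_labels, predicted_probs, eps=1e-12):
    """Average cross-entropy (natural log) between integer class labels
    and per-example predicted probability distributions."""
    total = 0.0
    for label, probs in zip(true_labels, predicted_probs):
        # Clip the probability of the true class to avoid log(0).
        p = min(max(probs[label], eps), 1.0)
        total += -math.log(p)
    return total / len(true_labels)


# Hypothetical predictions from two imaginary binary classifiers.
y_true = [0, 1, 1]
confident = [[0.9, 0.1], [0.1, 0.9], [0.2, 0.8]]  # mostly right and sure
uncertain = [[0.5, 0.5], [0.5, 0.5], [0.5, 0.5]]  # always a coin flip

loss_confident = cross_entropy(y_true, confident)
loss_uncertain = cross_entropy(y_true, uncertain)
```

The classifier that places high probability on the correct classes receives the lower loss, while the coin-flip classifier scores ln(2) per example, the cross-entropy of an uninformed binary guess.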

Conclusion:

Entropy is a foundational concept in machine learning, serving as a tool for quantifying uncertainty and aiding decision-making. By understanding how entropy works and its applications in various aspects of machine learning, you can make better-informed choices when it comes to data preprocessing, feature selection, and model evaluation.

Applied this way, entropy helps data scientists and machine learning practitioners build more accurate and efficient models.