What Is Regularization in Machine Learning?

Regularization in machine learning acts as a safeguard against overfitting, helping models perform well on real-world data they have not seen before.

In the ever-evolving realm of machine learning, the pursuit of accurate and robust models comes with a common pitfall: overfitting. It's a challenge that every data scientist encounters at some point.

Thankfully, regularization emerges as the knight in shining armor, offering a powerful solution to combat overfitting while improving the generalization of machine learning models. In this article, we'll delve into the world of regularization, exploring what it is, why it's essential, and how it works its magic in the field of machine learning.

credit: freepik

What is Regularization in Machine Learning?

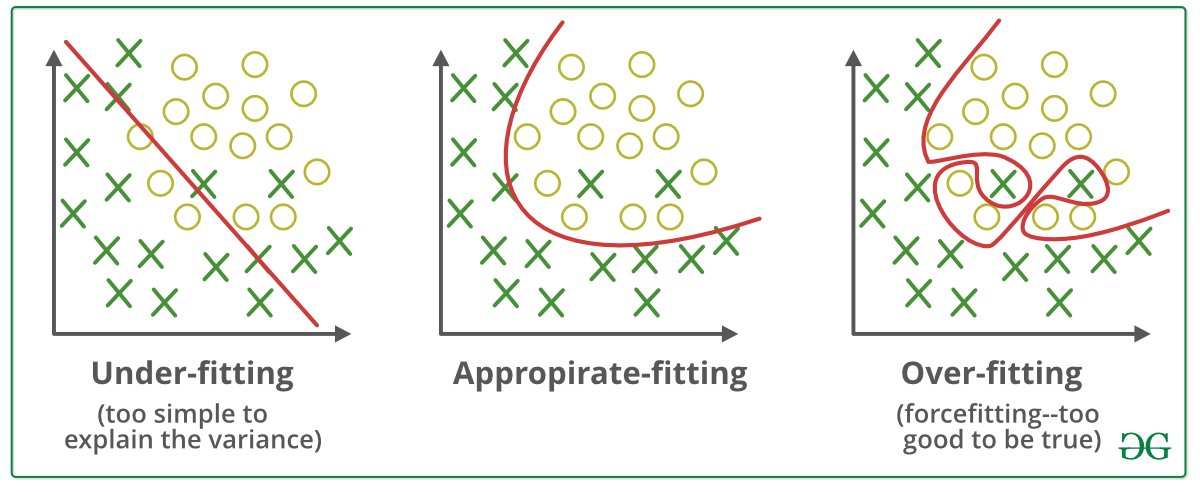

Regularization is a set of techniques employed in machine learning to prevent models from fitting the training data too closely, which can lead to overfitting. Overfitting occurs when a model learns not only the underlying patterns in the data but also the noise and randomness, resulting in poor performance on unseen data. Regularization methods introduce constraints or penalties to the model during training, discouraging it from becoming overly complex and encouraging a better balance between fitting the training data and maintaining generalization.
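As a rough sketch, the idea of adding a penalty during training is often written as a single objective. The symbols here are notation assumed for illustration and do not appear elsewhere in this article: $w$ stands for the model's coefficients, $\mathrm{Loss}(w)$ for the ordinary training loss, $\Omega(w)$ for the penalty, and $\lambda \ge 0$ for a strength setting chosen by the practitioner.

$$
J(w) = \mathrm{Loss}(w) + \lambda \, \Omega(w)
$$

With $\lambda = 0$ the model is trained on the loss alone. Larger values of $\lambda$ put more weight on keeping the model simple and less on fitting the training data closely.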

credit: Analytics Vidhya

Types of Regularization Techniques:

In the world of machine learning, two of the most widely used regularization techniques are:

- L1 Regularization (Lasso): L1 regularization adds the sum of the absolute values of the model's coefficients, scaled by a tunable strength parameter, as a penalty term to the loss function. This encourages sparsity: the coefficients of less useful features are driven to exactly zero, so the model effectively keeps only a subset of the most important features.

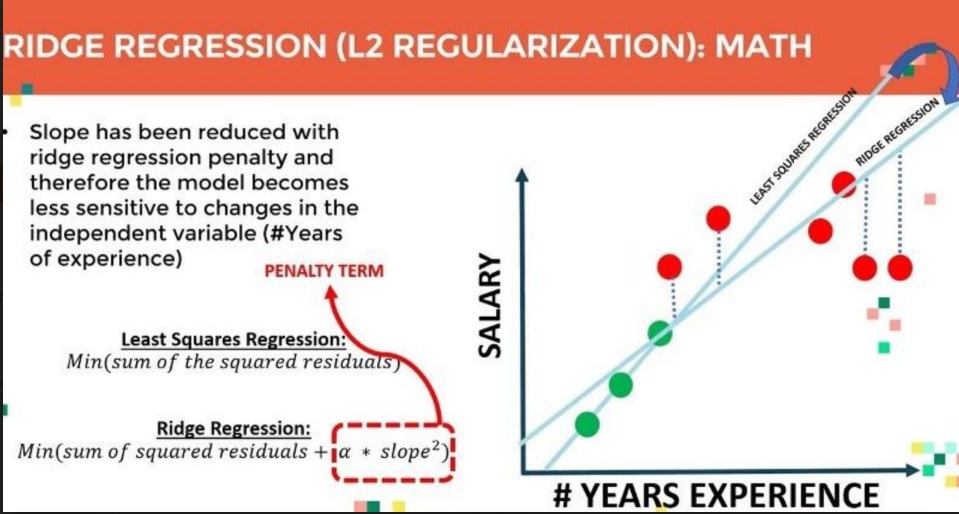

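- L2 Regularization (Ridge): L2 regularization adds the sum of the squared coefficients, scaled by a tunable strength parameter, as a penalty term to the loss function. It discourages extreme values in the coefficients, shrinking them toward zero without usually making them exactly zero, and tends to distribute the importance more evenly across all features.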
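To make the two penalty terms concrete, here is a minimal sketch in plain Python. It assumes a linear model without an intercept and mean squared error as the loss; the function names, the strength parameter `lam`, and the toy data are all hypothetical and chosen only for illustration.

```python
def l1_penalty(weights, lam):
    """Lasso-style penalty: lam times the sum of absolute coefficient values."""
    return lam * sum(abs(w) for w in weights)


def l2_penalty(weights, lam):
    """Ridge-style penalty: lam times the sum of squared coefficients."""
    return lam * sum(w * w for w in weights)


def mse(weights, rows, targets):
    """Mean squared error of a linear model without an intercept."""
    errors = [
        sum(w * x for w, x in zip(weights, row)) - y
        for row, y in zip(rows, targets)
    ]
    return sum(e * e for e in errors) / len(errors)


def regularized_loss(weights, rows, targets, lam, penalty):
    """Data-fit term plus the chosen penalty term."""
    return mse(weights, rows, targets) + penalty(weights, lam)


# Hypothetical toy data: the target is exactly twice the first feature,
# and the second feature is irrelevant.
rows = [[1.0, 0.5], [2.0, -1.0], [3.0, 0.25]]
targets = [2.0, 4.0, 6.0]
weights = [2.0, 0.0]

lasso_loss = regularized_loss(weights, rows, targets, lam=0.1, penalty=l1_penalty)
ridge_loss = regularized_loss(weights, rows, targets, lam=0.1, penalty=l2_penalty)
```

Because these weights fit the toy data perfectly, the error term is zero and everything that remains in each loss is the penalty itself. That makes it easy to see how the same coefficient of 2.0 is charged differently by the absolute-value and squared versions.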

Why Regularization is Essential:

Regularization serves as a crucial tool in the machine learning toolbox for several reasons:

- Preventing Overfitting: As mentioned earlier, the primary role of regularization is to prevent overfitting, ensuring that a model generalizes well to unseen data.

credit: Medium article by Amod Kolwalker

- Feature Selection: Regularization techniques like L1 can automatically perform feature selection by driving some feature coefficients to zero. This simplifies the model and can reduce the impact of multicollinearity, since among a group of highly correlated features L1 tends to keep only a few.

- Enhancing Model Stability: Regularization can make models more stable by reducing the variance in their predictions, leading to more reliable and consistent results.
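The difference between zeroing out coefficients and merely shrinking them can be seen in a simplified, one-coefficient-at-a-time setting. The sketch below assumes each coefficient is penalized independently around an unregularized value `z`, which is a deliberate simplification of how real solvers behave. The function names, the strength `lam`, and the starting coefficients are hypothetical.

```python
def soft_threshold(z, lam):
    """Minimizer of 0.5 * (w - z)**2 + lam * abs(w): the L1 (lasso) case."""
    if z > lam:
        return z - lam
    if z < -lam:
        return z + lam
    return 0.0


def ridge_shrink(z, lam):
    """Minimizer of 0.5 * (w - z)**2 + 0.5 * lam * w**2: the L2 (ridge) case."""
    return z / (1.0 + lam)


# Hypothetical unregularized coefficients for four features.
unregularized = [3.0, -0.25, 0.5, -2.0]
lam = 1.0

lasso_coefs = [soft_threshold(z, lam) for z in unregularized]
ridge_coefs = [ridge_shrink(z, lam) for z in unregularized]

print(lasso_coefs)  # [2.0, 0.0, 0.0, -1.0]
print(ridge_coefs)  # [1.5, -0.125, 0.25, -1.0]
```

In this toy case the L1 version sets the two small coefficients to exactly zero, which is the feature-selection effect, while the L2 version keeps every feature and only scales each coefficient down.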

Conclusion:

Regularization in machine learning is a potent strategy to strike a balance between model complexity and performance. It acts as a guardian against overfitting, ensuring that your machine learning models don't get lost in the intricacies of the training data. By introducing penalties and constraints, regularization helps models generalize better, resulting in more robust and accurate predictions.

So, the next time you venture into the world of machine learning, remember the importance of regularization. It's a key element in building models that not only perform well on your training data but also excel when faced with new, unseen challenges—a valuable asset in the ever-evolving landscape of artificial intelligence.